Looking at Us Looking at AI Looking at Us

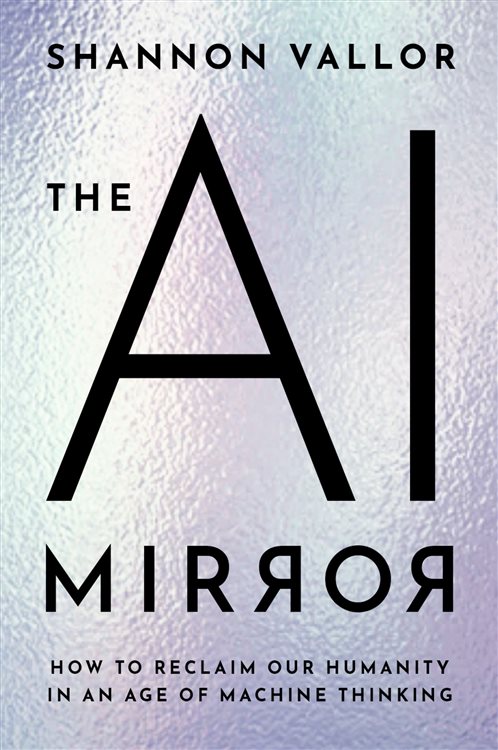

Artificial intelligence (AI) exists only in nascent forms, yet finding a way to understand it as it’s currently structured for broad application is important, if daunting. Shannon Vallor, a philosopher and ethicist, suggests using a mirror as a metaphor in her book, The AI Mirror. The book stems from her work both as an academic philosopher and as an AI ethicist at Google.

Vallor describes her book as a “polemic,” and that it is when she says AI “in its dominant commercial form, endangers our humanity.” (p. 4) I’m leaving her polemics aside, mostly. My interest is in her use of the mirror as a metaphor for AI, large language models (LLMs) in particular: how she constructs the metaphor, some of the consequences of AI seen through it, and some suggestions she makes based on what it reveals about AI and ourselves.

Reflections on the Mirror Metaphor

The mirror as metaphor for AI came to Vallor based on its content source, that is, us: our words, our computations, our images, our sounds, and all the other digestible forms of our creations. What AI regurgitates from our prompts is based on an algorithmic mixing and matching of the collective creations it ingests.

We often think of a “mirror image” as an exact replica of what faces it, and so the mirror metaphor for AI could convey that AI outputs are true and objective. To the contrary, Vallor hastens to caution, “mirrors do not merely reveal things as they are: mirrors also magnify, occlude, and distort what is captured in their frame. Their view is always both narrower and shallower than the realities they reflect.” Consequently, her metaphor is as much about what “AI mirrors do not show us: what they hide, what they diminish, what humane possibilities for self-engineering are lost in their bright surfaces.” (pp. 13-14)

Throughout the book, Vallor emphasizes that little of the human essence is to be found behind the AI mirrors, especially the parts of it that could contribute to AI analytical output.

An AI mirror is not a mind. It is a mathematical tool for extracting statistical patterns from past human-generated data and projecting these patterns forward into optimized predictions, selections, classifications, and compositions…Minds depend upon the brain for their reality…[but] our mental lives are driven by other bodily systems as well: our motor nerves, the endocrine system, even our digestive system. (pp. 38-40)

Vallor extends the metaphor by adding the myth of Narcissus. In the myth, Narcissus catches his image in a pool and becomes so enamored with its reflected beauty that he can’t extricate himself before he wastes away into oblivion. Vallor worries that we risk the same fate when AI has us “fixated, confined, immobilized, held captive.” (p. 5) Vallor points to another character from this myth who makes it more poignant still. Echo, a nymph, can only parrot the last few words she’s heard. In applying Echo’s trait to AI, Vallor draws a contrast: Echo returns the words she has taken in, while “a large language model returns to us not our own recent utterances, but a statistical variation on the collected, digitized words of untold millions.” (p. 34) This trait of large language models underlies some of the consequences the metaphor reveals.

Truth of Consequences

The metaphor helps us see that what we get from AI results is based on statistical probabilities of words occurring in a certain order without regard to how true any of it is. So, to Vallor, AI models are “like the human bullshitter. They aren’t designed to be accurate—they are designed to sound accurate.” (pp. 120-122) She does not use the term bullshit blithely. She refers to Harry Frankfurt’s treatise on the subject, in which he distinguishes bullshit from lying by its indifference to the truth and its indiscriminate application. AI users often see this consequence in the form of the erroneous answers they get, which are referred to colloquially as “hallucinations”; Vallor calls them “fabrications.” These consequences range from humorous and annoying to unproductive and deadly.

Although the AI source for content is mainly us, Vallor warns that not all of us contribute content, but rather just a small subset of us, and certainly not a subset that is representative of the human race in all its variations. In terms of her metaphor, a mirror cannot reflect what it cannot see, which has consequences for what we can expect from AI predictions. AI’s complete reliance on what it has seen limits its predictions to what can be inferred from the historical data that exists and that it can reach, “what humans valued enough to describe or record in data. But not all humans.” (p. 133) And, with no input from broad-based human experience, wisdom, imagination, and purpose, she wonders how we can have any “hope of making ourselves more than what we have already been.” (pp. 90-91) There’s nothing new under the mirror.

The polemical consequences of AI that Vallor details concern the commercial entities controlling what the mirror sees and what it shows. In particular, given the weakening of regulatory functions over the previous decades, she sees how “the rise of the behemoths of ‘Big Tech’” has transformed them “from intermediaries of social power to its primary executors.” (p. 175)

All this, to Vallor, “is a calamity—a betrayal of life and its possibilities,” (p. 36) but she does not go so far as to say we’re doomed. She allows that huge benefits could accrue to societies and individuals if the AI mirrors become more reliable and objective sources of information. That is, amidst a pile of bullshit, the optimist in her is saying there must be a calf in it somewhere. She offers suggestions on how we can unearth the calf.

How to be the Fairest of them All

Vallor’s suggestions for managing AI applications call upon the wherewithal of users for now—DIY quality control required. Many of her suggestions center on comprehending how AI output is constructed and accounting for the implications of that construction.

When we do catch sight of our past in the AI mirror, it is essential that we do not mistake those patterns for destiny or allow them to become self-fulfilling prophecy…If we mindlessly replicate what we see in the mirror of history, we will never build upon that knowledge, never be free to try new and better approaches. (p. 101)

She speaks of knowing “data provenance,” and of knowing when “algorithmic predictions and profiles are scientifically credible, ethically justifiable and politically accountable, and when they are not.” (p. 52) Although that may seem like asking a lot from even advanced AI users, we need to go through the same processes for our own analyses. And from these analyses, we also at times produce hallucinations, distortions, and slanted results. In this way, the AI mirror metaphor applies, to a degree, to humans as well. But we can incorporate aspirations, imagination, wisdom, and morals into our analyses. That differentiation is key to seeing how Vallor’s AI mirror metaphor situates AI as a “powerful amplifier of human ability,” not as a substitute for it. (p. 28)

Vallor S. The AI Mirror. Oxford: Oxford University Press, 2024.

Frankfurt H. On Bullshit. Princeton: Princeton University Press, 2005.

Web image by Medhum.org.